The Opportunities and Risks of AI in Higher Education

05-22-2025 06:40 AM - edited 05-22-2025 06:59 AM

An EDUCAUSE survey of higher education practitioners in the United States produced a synthesized list of appropriate and inappropriate uses of AI in education (see the summary below). What do you think? Has anything changed at your institution, or in general practice, regarding ethical uses in the year since these results were published? How do these results differ in your country?

The higher education community is still looking for common ground on how AI should and should not be used for learning and work. Respondents were asked to describe both appropriate and inappropriate uses of AI in higher education. In general, respondents emphasized the ethical and transparent use of AI, no matter the specific application. One respondent summarized, "AI should be embraced as an emerging technology and should have a place in coursework with focus on implementation, adoption, research, utilization, and ethical and legal considerations."

Appropriate Uses

- Personalized student support: tutoring, translating, academic/career advising, easing administrative processes, brainstorming, editing, accessibility tools and assistive technology

- Teaching assistant: course design, grading, providing feedback to students, providing feedback to faculty, improving accessibility of course materials

- Research assistant: finding and summarizing literature, sorting and analyzing data, predictive modeling, creating data visualizations

- Administrative assistant: automating tasks, drafting/revising communications (e.g., email), transcribing audio

- Learning analytics: analyzing and visualizing student success data, providing insights for student recruitment and retention

- Digital literacy education: preparing students to be part of the digital workforce and society

Inappropriate Uses

- Trusting generative AI outputs without human oversight

- Simulating human judgment (e.g., grading student work, peer reviewing academic articles, writing recommendation letters)

- Representing AI-generated work as one's own (e.g., using AI to write papers, take exams)

- Not citing AI as a resource for generated content

- Making high-stakes decisions (e.g., student admissions) without human oversight

- Conducting invasive data collection or surveillance

- Relying on AI tools in place of human thought and creativity

- Giving tools unauthorized access to sensitive data (e.g., PII) or intellectual property

Though these lists reflect areas of general agreement among respondents, it is clear that the higher education community has not found widespread agreement on the use of AI.

Topics: Artificial Intelligence

07-11-2025 08:28 AM

Hi, Karl!

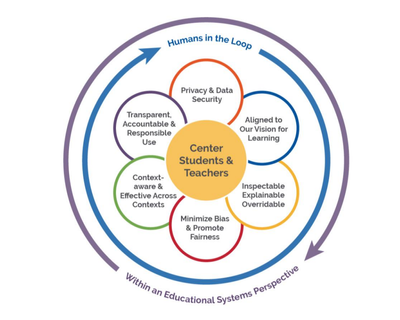

I don't know that I have anything to add to this list. It did bring to mind the graphic below from a report we put out when I was with the U.S. Department of Education. If we jump straight to the doing of the thing, we run the risk of not asking how we want the thing (in this case AI) to operate within our human-based systems.

Without shared expectations of how these tools/resources are at play within our learning communities, we are somewhere between letting "a thousand flowers bloom" and hoping we escape the tragedy of the commons. It has been easy to shrug off many of the possible pedagogical implications of technologies over the last decade or two. In AI, though, we appear to have a moment where the conversation about our shared expectations of the "actions and attributes" of technology in education is inescapable.

The full report can be found here - https://www.ed.gov/sites/ed/files/documents/ai-report/ai-report.pdf

Thank you for opening the conversation!